About the guide

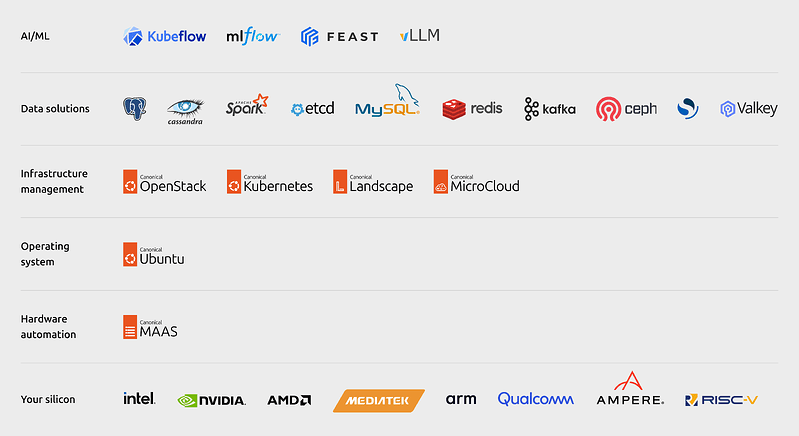

Managing private AI infrastructure is a complex undertaking. It requires a tightly integrated ecosystem spanning specialized silicon, networking, container orchestration, data, MLOps software, and more.

Open source software provides a transparent and flexible foundation for this architecture – particularly given the critical role of Kubernetes in AI workloads and the prevalence of open source software across the AI landscape. A unified, end-to-end open source stack is therefore the most logical path to enterprise-grade AI infrastructure.

Building AI infrastructure on Ubuntu enables organizations to take advantage of Canonical’s extensive partner ecosystem – which includes industry leaders such as NVIDIA, Intel, and AMD – to solve the notorious hardware enablement problem and freely choose the optimal silicon for their use case.

Download the guide to:

- explore the different layers of AI infrastructure

- examine the benefits and applications of public, private, and hybrid clouds for AI workloads

- get the blueprint to build a private cloud for AI using Canonical’s open source stack